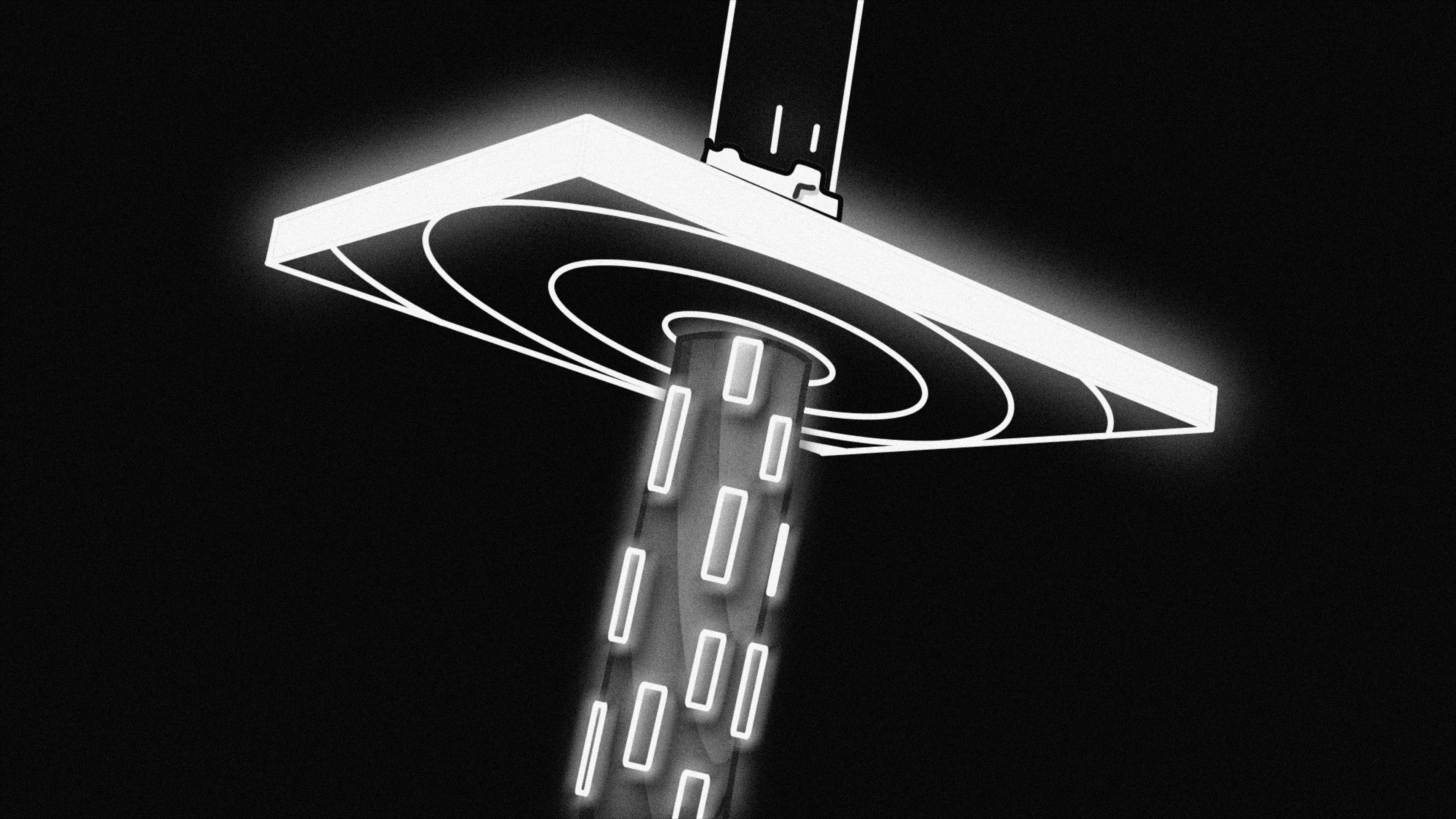

Andrej Karpathy coined the term in early 2025 and the name stuck immediately — because it described something people were already doing but hadn’t named. Vibe coding: you have a rough sense of what you want to build, you describe it to an AI, the AI writes the code, you iterate from there. You’re not writing every line. You’re mostly directing.

If that sounds like a shortcut, it is. It’s also, in certain contexts, a genuinely different and more effective way to build software. The interesting question isn’t whether it’s real — it is — but when it works, when it doesn’t, and what it changes about what it means to be an engineer.

What Vibe Coding Actually Looks Like

In practice, it’s a spectrum.

On one end: you open a chat interface, describe a feature in plain English, get a code block, paste it in, it mostly works, you tweak. This is the version most people mean when they say vibe coding. Low friction, fast results, good for prototyping and small tools.

On the other end: you’re using a tool like Claude Code that has full access to your repository. You describe what you want at a high level, the agent reads the relevant files, understands your existing patterns, writes targeted changes across multiple files, runs tests, and fixes failures — all in a loop you oversee but don’t manually execute. You’re still making decisions; you’re just not typing every line.

Both are “vibe coding” in spirit. The second is considerably more powerful, and it’s what I actually use day to day.

What It’s Good For

Prototyping and exploration. The fastest way to figure out if an idea is viable is often to build a rough version of it. Vibe coding collapses the time from “I want to try this” to “here’s something I can look at and react to.” When the goal is learning or validation — not production code — this is exactly the right trade-off.

Boilerplate and scaffolding. A huge amount of software development is writing code that’s predictable but tedious: CRUD endpoints, config files, test fixtures, migration scripts. These are well-understood patterns that AI models handle well. Delegating them frees your attention for the parts that actually require judgment.

Working in unfamiliar territory. Starting a new framework, a new language, or a new AWS service used to mean a lot of documentation time before you could write anything substantive. AI tools compress that curve significantly. You can describe what you want and let the agent produce a working starting point, then study it to understand the pattern.

Iteration speed. The feedback loop between “I want to change this” and “it’s changed” is dramatically shorter. For frontend work especially — where you’re reacting to how something looks and feels — the ability to describe a change and see it immediately is transformative.

Where It Falls Apart

Complex systems with real constraints. AI models are good at writing code that looks correct. They’re less reliable when the correctness depends on subtle system-level knowledge: memory management, concurrency semantics, specific database behaviors, security invariants. The output is plausible but needs careful review. The more the correctness is determined by things outside the prompt, the more cautious you need to be.
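To make the concurrency point concrete, here is a minimal sketch of code that reads as correct but depends on semantics outside the prompt. The `Inventory` class and `reserve_concurrently` helper are hypothetical names invented for this illustration, not taken from any real project. The unsafe method is the kind of thing that passes a quick look and a single-threaded test.

```python
import threading


class Inventory:
    """Hypothetical stock counter, used only for illustration."""

    def __init__(self, stock: int) -> None:
        self.stock = stock
        self._lock = threading.Lock()

    def reserve_unsafe(self) -> bool:
        # Looks correct, but the check and the decrement are separate steps.
        # Two threads can both pass the check before either one decrements,
        # so the stock can be oversold under concurrent use.
        if self.stock > 0:
            self.stock -= 1
            return True
        return False

    def reserve(self) -> bool:
        # Holding the lock makes check-and-decrement one atomic step.
        with self._lock:
            if self.stock > 0:
                self.stock -= 1
                return True
            return False


def reserve_concurrently(inventory: Inventory, attempts: int) -> int:
    """Run `attempts` locked reservations on separate threads.

    Returns the number of reservations that succeeded.
    """
    results = []
    results_lock = threading.Lock()

    def worker() -> None:
        ok = inventory.reserve()
        with results_lock:
            results.append(ok)

    threads = [threading.Thread(target=worker) for _ in range(attempts)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return sum(results)
```

Both methods behave identically in a single-threaded test, which is why the difference is easy to miss in review. Only the locked version guarantees that no more units are reserved than were in stock.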

Code you don’t understand. This is the one that gets people into trouble. Vibe coding makes it easy to accumulate code that works until it doesn’t, and when it breaks, you’re debugging something you never fully understood. The technical debt is real. For anything you’ll maintain, you need to understand what was written — even if you didn’t write it.

Architectural decisions. “Build me a microservices system for this e-commerce app” will get you a plausible architecture. It won’t necessarily be the right one for your scale, your team’s operational capabilities, your latency requirements, or your budget. The AI doesn’t know your constraints unless you tell it — and knowing which constraints matter is the job of an engineer, not a model.

Security-sensitive code. Input validation, authentication flows, authorization logic, cryptographic operations: these are areas where “looks right” isn’t good enough. AI-generated security code needs at least as much scrutiny as hand-written code, arguably more. The model can confidently produce something that contains a subtle vulnerability.
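One example of “looks right” hiding a subtle problem, sketched in Python. The function names `check_token_naive` and `check_token` are hypothetical. Both return the same answers, but only the second uses the standard library's `hmac.compare_digest`, which is designed to reduce timing side channels when comparing secrets.

```python
import hmac


def check_token_naive(supplied: str, expected: str) -> bool:
    # Functionally correct, but == may return as soon as it finds a
    # mismatch, so response time can leak how much of the token matched.
    return supplied == expected


def check_token(supplied: str, expected: str) -> bool:
    # compare_digest is designed so its running time does not depend on
    # where the inputs differ. Encoding to bytes allows non-ASCII input.
    return hmac.compare_digest(
        supplied.encode("utf-8"), expected.encode("utf-8")
    )
```

A functional test cannot tell these two apart, which is the point: the review has to catch what the tests won't.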

The Skill That Doesn’t Change

Here’s what vibe coding doesn’t automate: knowing what to build.

The AI can write code faster than you can. It can’t tell you what the right feature is, whether the architecture will hold up at 10x scale, whether this is the right trade-off given your team’s operational burden, or whether the thing you’re building solves the actual problem. Those decisions require understanding the domain, the system, the team, and the users. They require engineering judgment.

What’s changed is the relationship between judgment and execution. Before AI tools, a lot of what defined a “good engineer” was execution speed — how quickly you could write code you’d already figured out. That gap is closing rapidly. The judgment part — knowing what to build and how to think about it — is becoming proportionally more valuable.

This is good for engineers who’ve been developing judgment. It’s uncomfortable for engineers whose value was primarily execution throughput.

What I Actually Do

This site was built almost entirely through vibe coding with Claude Code. Every component, every CSS system, every deployment script was described at a high level, implemented by the agent, then reviewed and iterated on by me.

My workflow:

- Describe what I want clearly, including constraints (accessibility, performance, existing patterns)

- Review the plan before any files change

- Read the diff: every change, in full, not a skim

- Test in the browser

- Iterate

The “read the diff” step is non-negotiable. It’s not just about catching errors; it’s how I stay connected to the codebase. If I approved every change without reading it, I’d accumulate code I don’t understand, and the first time something broke I’d have no mental model for debugging it.

For infrastructure work — CloudFront configs, IAM policies, S3 bucket settings — I’m even more deliberate. The agent proposes, I verify the command matches my intention, I approve. The speed benefit is still there; the oversight doesn’t disappear.
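Part of that verification can be mechanical. As a rough sketch, and not a description of the tooling actually used for this site, a small script could flag overly broad statements in a proposed IAM policy before a human reads it. The helper name `find_broad_statements` is hypothetical; the `Statement`, `Effect`, `Action`, and `Resource` keys follow the standard IAM JSON policy format.

```python
import json


def _as_list(value):
    # IAM allows a single value or a list for these fields.
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_broad_statements(policy_json: str) -> list[str]:
    """Return human-readable warnings for wildcard Allow statements."""
    policy = json.loads(policy_json)
    findings = []
    for index, stmt in enumerate(_as_list(policy.get("Statement"))):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action"))
        resources = _as_list(stmt.get("Resource"))
        # "*" or "service:*" grants every action (in that service).
        if any(a == "*" or a.endswith(":*") for a in actions):
            findings.append(f"statement {index}: wildcard action")
        if "*" in resources:
            findings.append(f"statement {index}: wildcard resource")
    return findings
```

A check like this only catches the obvious cases. It narrows what needs attention; it does not replace reading the policy.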

The Honest Assessment

Vibe coding is real, it’s useful, and it’s not going away. The models are getting better, the tools are getting more capable, and the feedback loops are getting tighter. Resisting it on principle is like refusing to use a compiler because “real engineers write machine code.”

But it’s also not magic. The engineers getting the most out of it are the ones who use it deliberately — clear about when to delegate and when to take the wheel, consistent about reviewing what gets written, honest about the limits of what they understand.

The vibe is a starting point, not an ending point. What you do with the output is still your job.